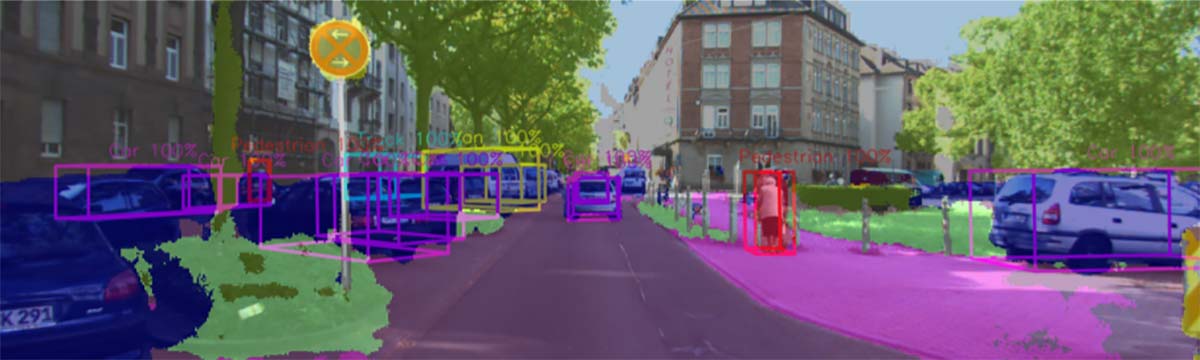

Perception in self-driving cars combines several sensors to perceive and model the environment around the vehicle in real time. The downstream driving modules depend on it: actions such as braking, for example, are derived from the perception output. Sensors such as cameras, radars and LiDARs capture the environment around the car, and software systems based on neural networks process this data and act as the ‘brain’ of the vehicle. In current AI solutions, the underlying hardware relies on powerful but highly power-hungry GPUs, which are not suitable for smart sensors or for central domain controllers that need to fuse several input streams (which requires multiple chips). This use case therefore investigates how far neuromorphic chip computing can reduce overall power consumption while keeping the same detection performance and improving latency compared with such conventional, more power-hungry chips.

Valeo, in collaboration with CEA and GML, is working to demonstrate how neuromorphic chip computing can be applied in the perception stack for autonomous driving, using LiDAR and camera neural network tasks for 3D object detection and classification of road users.